Forbidden Vision: How One Developer Fixed AI's Biggest Face Problem

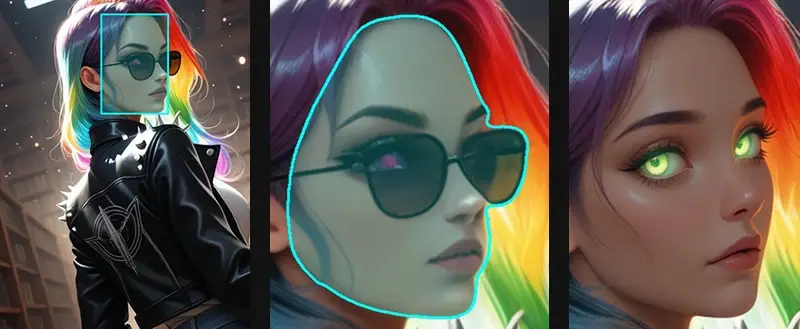

The face is broken. A distorted nose. A melting jawline. Eyes that point in slightly different directions. Masks that bleed into the wrong places. It doesn't matter how good your prompt is or how many times you regenerate. Faces, especially at unusual angles, have always been the quiet weak point that AI image generation hasn't been able to fully crack.

A member of the ComfyUI community named luxdelux7 kept hitting that wall. And instead of working around it or waiting for someone else to solve it, he built the solution himself. The result is called Forbidden Vision.

What "Forbidden Vision" Actually Is?

Forbidden Vision is described by its creator as "a complete ComfyUI enhancement suite" but that undersells what's actually going on under the hood. Most tools in this space are built on general-purpose models. Forbidden Vision ships three models trained from scratch on hand-curated data, built specifically for these tasks. That distinction matters enormously. General models make compromises across a wide range of use cases. These models were built for one job and one job only: fixing faces in AI-generated images, reliably, across every scenario that tends to break everything else.

The three pillars of the suite are a Face Detection and Segmentation system, a Neural Color Corrector, and a multi-pass generation workflow called the Fixer and Refiner pipeline.

Custom-Trained Models, Not Repurposed Ones

The Detection and Segmentation model maintains consistency across real photos, anime, and NSFW content, and handles extreme poses and heavy occlusion, the exact cases that cause the most visible failures in standard tools. This isn't a small improvement over the status quo. Extreme poses and occlusion (where part of the face is obscured or cut off by the frame) are notoriously difficult edge cases that most existing pipelines simply fall apart on. The fact that this model was trained specifically on these scenarios, using hand-curated data, means it's actually seen the problem it's being asked to solve.

The second model, the Neural Corrector, handles something that is typically left entirely to the user: it analyzes and automatically fixes exposure, black levels, and color. This kind of correction is usually done manually, by eye, through a series of adjustments that varies from image to image. Automating it through a learned model rather than a set of static rules is a meaningful step forward.

The Fixer and Refiner Workflow

The genius of Forbidden Vision is not just in the individual models but in how they work together as a pipeline. The Refiner prepares the image, correcting tone and color, and then hands it off to the Fixer, which can operate at different strengths depending on what the face actually needs. A subtle denoise pass at low strength can clean up minor artifacts without altering the character of the face. Pushing the strength higher gives the model more latitude to reshape and reconstruct a face that's significantly broken. This graduated approach gives users real control over how aggressively they want the correction to work, rather than applying a one-size-fits-all fix.

Built by a Community Member, for the Community

luxdelux7 describes himself as "an independent developer and AI creative toolmaker" who builds ComfyUI tools and trains models "with the goal of creating professional-grade, open-source alternatives to complex workflows." That framing is worth taking seriously. Forbidden Vision is not a thin wrapper around existing infrastructure. It required training models from scratch, curating datasets by hand, and iterating on a complete workflow architecture. That is a substantial amount of work for an independent developer to take on.

When he shared it with the community, the response was immediate: 288 upvotes on the subreddit within days. That kind of reception reflects something beyond appreciation for a useful tool. It reflects recognition of what it takes to actually solve a problem rather than paper over it. The ComfyUI community, which now numbers in the millions, has been living with broken faces in generated images for a long time. Forbidden Vision is the first tool that approaches the problem with the seriousness it deserves.

Why This Matters

The broader significance of Forbidden Vision is what it demonstrates about where AI tooling is heading. For a long time, the assumption was that face correction required proprietary infrastructure, large teams, and significant compute budgets. luxdelux7 showed that a single focused developer, working with hand-curated data and purpose-built training, can produce something that outperforms general-purpose solutions on the specific problem he cared about.

It also works across the full range of content that ComfyUI users actually generate. Anime and realistic styles require different detection heuristics. Extreme poses break most segmentation masks. Forbidden Vision handles all of it because those cases were baked into the training process from the start, not treated as edge cases to be addressed later.

Getting Started

Forbidden Vision can be installed via ComfyUI Manager by searching "Forbidden Vision," or manually by cloning the repository into the custom_nodes directory. A complete example workflow is included in the repository, which makes it straightforward to see the full pipeline in action before adapting it to your own use case. The project is released under the GNU Affero General Public License v3.0, keeping it fully open source.

The models, the datasets, and the ongoing maintenance all take real time and resources to sustain. luxdelux7 has kept the tool free for the community and continues to iterate on it. If Forbidden Vision improves your workflow, supporting his work directly is what makes that continued development possible.

For a problem that has frustrated AI image creators since the beginning, it's fitting that the solution came not from a lab or a startup, but from someone who was simply tired of broken faces and decided to fix it.

Explore and Support Forbidden Vision

Find the full project, documentation, and installation instructions on the Forbidden Vision GitHub page.

If this tool has improved your workflow and you'd like to help luxdelux7 keep building, you can support him directly on Ko-fi.